Artificial intelligence (AI) tools now exploit smart contracts roughly twice as effectively as they detect vulnerabilities, according to Binance Research.

AI has become a central talking point in the conversation around crypto hacks, and analysts increasingly suspect that attackers are using these tools to pull off DeFi exploits.

Why the AI Offense-Defense Gap Is Widening

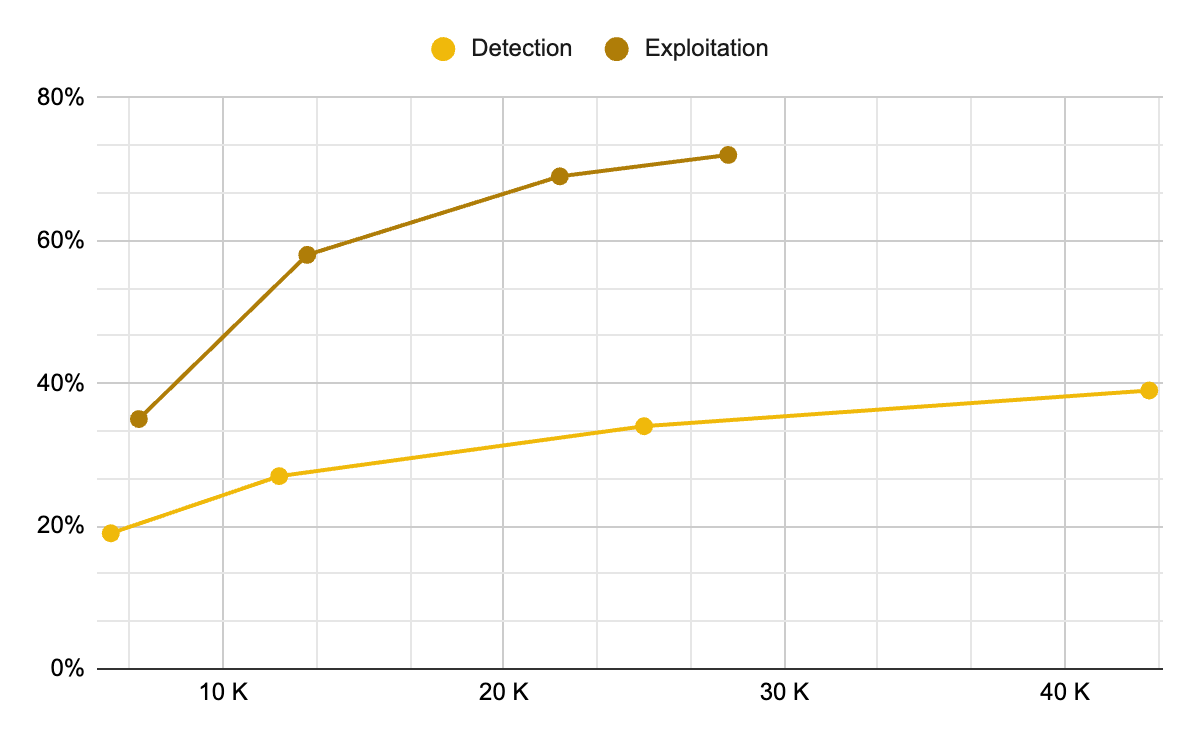

In a recent report, Binance Research noted that GPT-5.3-Codex achieves a 72.2% success rate in "exploit" mode on EVMbench, while its success rate in "detect" mode is roughly half that.

“Whether we welcome it or not, AI is currently 2x better at exploitation than at detection,” the report read. “The economics now favor attackers.”

For context, EVMbench is a benchmark that measures how well AI agents can detect, patch, and exploit high-severity smart contract vulnerabilities. It draws on 117 curated vulnerabilities from 40 audits.

Smart contracts hold billions in user funds across decentralized finance (DeFi). Their open-source code makes them ideal targets for automated probing. AI systems can scan thousands of contracts in minutes at marginal cost.

The asymmetry is widening because attack costs are collapsing. Binance Research data shows that AI-powered exploits cost roughly $1.22 per contract on average, a figure projected to fall another 22% every two months.
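To put that trend in perspective, here is a minimal Python sketch that projects the per-contract cost forward. It assumes the reported 22% decline compounds every two months from the $1.22 baseline. The constant and function names are hypothetical, and this is an illustration of the stated trend, not Binance Research's own projection model.

```python
# Minimal sketch: project the average cost of an AI-powered exploit per
# contract, ASSUMING the reported 22% decline every two months compounds
# geometrically from the ~$1.22 baseline. Hypothetical names; this is not
# Binance Research's model.

BASELINE_COST_USD = 1.22   # reported average cost per contract
DECLINE_PER_PERIOD = 0.22  # reported decline per two-month period
PERIOD_MONTHS = 2          # length of one decline period, in months


def projected_cost(months: float) -> float:
    """Return the projected cost per contract (USD) after `months` months."""
    periods = months / PERIOD_MONTHS
    # Each period keeps (1 - 0.22) = 78% of the previous period's cost.
    return BASELINE_COST_USD * (1 - DECLINE_PER_PERIOD) ** periods


if __name__ == "__main__":
    for months in (0, 2, 6, 12):
        print(f"{months:>2} months: ${projected_cost(months):.2f} per contract")
```

Under this compounding assumption, one year (six two-month periods) leaves about 22.5% of the baseline, or roughly $0.27 per contract. The sketch treats the decline as compounding because a linear reading (0.22 × $1.22 removed each period) would push the cost to zero in about nine months.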

“Hacken’s SSDLC Maturity Survey shows over 80% of developers now use AI in development, but fewer than 40% use AI for advanced testing — leaving the offense-defense gap structurally lopsided,” Binance Research added.

The threat extends beyond static code. Analysts at TRM Labs have begun speculating that North Korean hackers are integrating AI into their reconnaissance and social engineering operations.

Such a shift would help explain attacks like the Drift exploit, which involved weeks of targeted manipulation of sophisticated blockchain systems. That marks a departure from North Korea's traditional reliance on basic private key compromises.


AI Is Reshaping the Economics of Crypto Fraud

The economics of online fraud have shifted just as dramatically. Chainalysis found that AI-powered scams pull in 4.5 times more money per case than conventional ones and generate nine times the transaction activity.

The firm noted that the spike in transaction volume suggests AI is helping scammers reach and juggle far more victims at once, a hallmark of fraud run at industrial scale.

Scammers are turning to deepfake technology and AI-generated content to craft convincing impersonations for romance and investment cons. Notably, in 2025, impersonation-based attacks alone exploded by 1,400% year-on-year.

Roughly 60% of industry respondents flag rising AI use by criminals as the leading driver of risk exposure in 2025. Crypto, in particular, is bearing the brunt. The sector accounts for 88% of all detected deepfake fraud cases worldwide.


The post AI Is 2x Better at Exploiting Smart Contract Flaws Than Catching Them, Binance Finds appeared first on BeInCrypto.
